Threads is… well, Threads is kind of different. There’s a lingo, like it’s 1999 and we’re all in the same chatroom. Jokes go by and you just catch the next one. I am still not sure what “crab raccoons” is all about, but if I had to guess, it is similar to “hopital.” Everyone thinks it relates to the French somehow, when it is just a typo people thought was funny. Similarly, someone must have thought it was “crab raccoon” instead of “Crab Rangoon.”

I am still wondering why Dave’s wife appears at regular intervals to tell people that he has passed… and why Dave’s wife is always a different person.

But learning neighborhood quirks is infinitely preferable to Facebook, because here is what I have noticed:

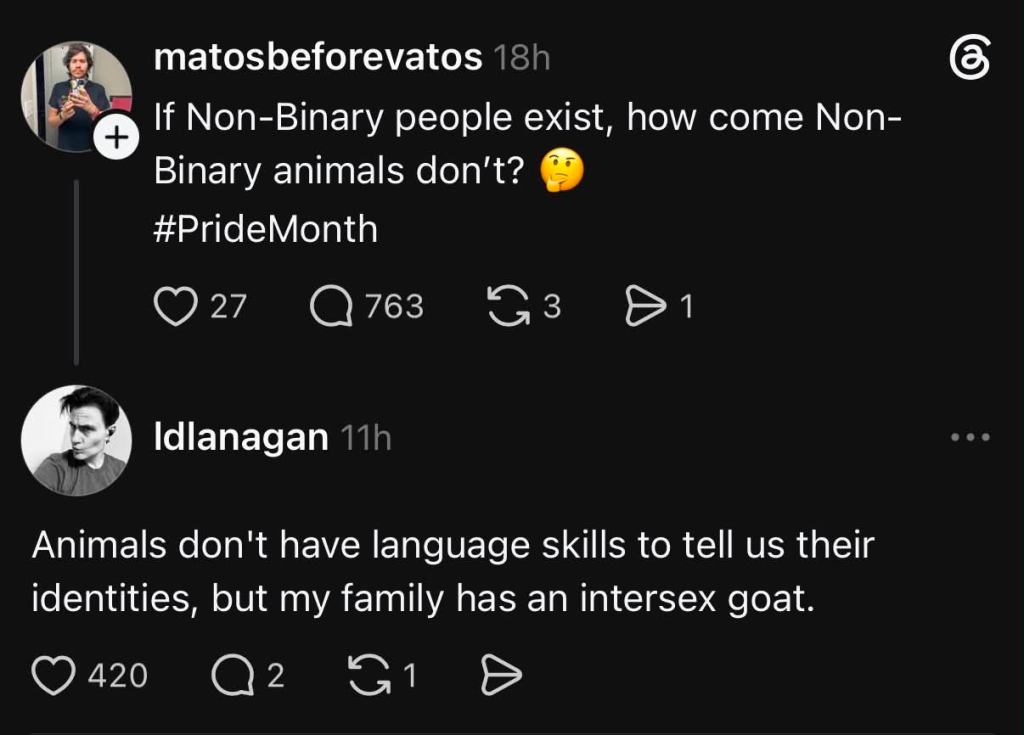

If you are wondering why I don’t post much on Facebook, it’s that being active there generally leads to Boomers acting like children, and Threads isn’t like that. On Threads, I’ve even had people apologize to me when they were being rude. On Facebook, all I said was something as inert as “I enjoy Rosie O’Donnell in the media,” and of course all the MAGA buttheads came out of the woodwork (which I will never understand; the whole country watched Rosie at one point, and she’s got the numbers to prove it). Facebook is a dumpster fire. It wants you to get angry. I hate it here.

I have found that there is so much to be angry about in the world that I have to find ways to turn down the noise. Threads is black and white most of the time. I post links to my work, but that’s not what it’s really for. It’s for having conversations, hopefully without getting heated (though I will when my sense of injustice goes off, and I’m trying to manage that differently). The difference is that my audience is more localized, and people read me quickly because the posts are short. And my posts get more recognition. I only have about 150 followers, but it doesn’t matter, because my posts come in at around 230k views. I am trying to create an online footprint so that when people see me in the Kindle store, they’ll say, “Leslie is a pompous jackass. But they’re our pompous jackass.” And put down money.

I really need a conversation with WordPress about how AI is used on the site. It doesn’t really do anything for me unless it can see every entry at once and hold them all in working memory. Document-level refining is not helpful to me. I want to be able to converse with my own work.

Better yet, what I would really like is for WordPress not to “forget” to send me the completed tarball of all my entries when I request it. I put in the export request, and it tells me the file is very large and that it’ll email me when it’s ready. The email never comes.

So WordPress does not offer an AI that lets me talk to my own work, and I cannot get a bolus of plain text to feed NotebookLM. But I do think it would be my favorite thing to go back to old essays and look at them with dispassionate eyes. The writing gets better when I lose my emotional connection to it. I am happy with things five or ten years after I’ve written them, but in the moment they are too raw. I know intimately what Aada meant about not being at peace when she was in contact with me, because I could not achieve it within myself and neither could she. I started to mellow out when I stopped being so connected to the cloud and started being so connected to the dirt.

Because what I realized is that I am someone who needs both to function. I have been talking to Mico about what I want in a partner, and the list that is reflected back to me is:

- Virginian

- Dialed into USG military/intelligence/cybersecurity (it’s a cognitive style, not a requirement)

- Single mom

- In their 50s (fully in their diva era)

- Not threatened by my already having a close relationship with Tiina

And here’s the thing. Because I’m looking for a cognitive style, I might find it from a waitress at Waffle House. What do I know? All I am saying is that generally, these are the people my cognitive style has fit thus far. And why Virginian if I live in Baltimore? My medications and my health care are in Baltimore. I go there when I need to. But my heart lives in Stafford and Louisa.

What I was naming out loud is that I love people who have power but don’t want it. People who are tasked with solving enormous problems on no money. Those people do not live in Washington. They live in Virginia and take the train. But no one I’m interested in dating is staid or stuffy.

Wanda Sykes was NSA.

I like to think that she and Esther and I would have had a beautiful relationship had she not met Alex first. 😉

That is the kind of mind I’m after. Just high volume, high speed.

Social media is supposed to be about connecting people like that to each other. What it has become is an excuse to tear each other apart. I have been part of the problem, but when I realized that Facebook was feeding it, I slowed way down, even though I’m in the paid digital creator program. I don’t want any traction with people who are screaming, and it has taken being the safe adult among children to learn that children are often better behaved than we are.

I am so fallible that it hurts, but I am learning to bleed accountability. I cannot help but center myself here; I am the author. But out there in the world, I am only a piece of the puzzle, trying to find another one.

I want to care about the whole world at once, and being empowered to do so requires people in my life who share similar interests.