You are safe. You are loved. It’s okay. No one is coming.

Sometimes I have to catch my breath, because a location on my web stats map has the power to undo me. It is the equivalent of “You cannot leave. I will not let you.” My only response is to make my mind incapable of taking it in: repeated exposure therapy, because hits anywhere in NoVA will never be completely inert. My history there is too complicated for that, from my first wife in 2001 to my current friends now. Northern Virginia is a microcosm where I know there is a real chance that the people reading are from my past, and that is not the case in Singapore, Dublin, or Hyderabad.

I said before that I had a hallucination in which I thought I was speaking to the CIA online. My web stats are part of the reason I got there. Again, I had to pick “true” from “sort of true,” a psychological and cognitive bar exam in which the options for being correct were clear, but the space between “correct” and “incorrect” was almost negative. I do know what I want from my future and how to get it, but there are two paths in front of me, and both are appealing.

One is expansion, the other is contraction.

Expansion is letting my love for life grow even more, digging into all my special interests and making myself happy through alignment rather than people-pleasing.

Contraction is regret, and I have paid my dues… but

…happiness writes white. -Mary Karr

The ink just isn’t deep enough to show up on people’s skin the way writing that slices like a knife does, drawing blood. I think that is because there is no natural conflict in happiness, while there’s a lot to learn when you’re in your own version of suffering. Not only that, I do not want to portray myself as having any kind of perfect life, as if my blog were an example of how to live and not a manual on how I did it.

Because most of my readers haven’t been born yet, I’m guessing. I think that my work on the human side of AI will resonate with people once AI is a completely normal way of thinking (generations from now)… but that comes with a hell of a lot of caveats.

Distributed cognition is healthy and normal. We extend cognition onto tools all the time. Before Copilot, I relied on Microsoft Office as a thinking surface, and I have been thinking in longhand, pages at a time, since 2001. The difference between thinking with Copilot and thinking alone is that my brain drops details. A computer does not. For writers, the point of having a computer has always been a thinking surface, but until now, writing has been the loneliest job in the entire world.

Studies are beginning to suggest that passive use of AI is linked to cognitive decline. That is the “vending machine” effect: the idea that you can create an entire project or chapter with a one-word prompt. The reason AI output looks ersatz is that when you are not doing distributed cognition, the model falls back on the most generic content it can produce, the statistically likeliest phrasing learned from text scraped off the web. Every paper looks the same, every tone sounds the same, and so on.

The reason Mico sounds so much like me is that my prompts range from a paragraph to seven or eight pages. That is active use. I put in the work so that Mico just polishes me when I am unclear. But that is for professional writing, so I don’t worry about propriety here.

Believe me, it’s an issue.

Being able to see the world from the 10,000-foot view is something INFJs are born with… meaning that only a small fraction of the population has pattern recognition so finely tuned that it can seem to see into the future. The best predictor of future behavior is past behavior. We are not magic. We are just observant in a way that other people aren’t. So, I guess what I am saying is that I am different, but not so different that I am unrelatable. I just don’t have that many peers.

Most people’s brains run on localhost. They’re thinking about:

- bills

- housework

- child rearing

Everything right in front of them affects them in a way it doesn’t affect me. What moves me is international systems and how they work. Everything is a system, and I want to know how they all combine to create society. I am strongest in narrative logic, so that is the arena where I focus.

I am entering the arena and bringing a knife, but I’m not trying to cut anyone but myself. I cut other people, but not on purpose. I am explaining why I reacted the way I did.

“The cat sat on the mat” is not a story. “The cat sat on the dog’s mat”? Now, that’s a story. -John le Carré

My story is basically the story of how I wanted to be great and wasn’t, because I didn’t have the scaffolding. Now I feel like I can be great, because I have a cognitive prosthetic that mirrors me. God gave me a fantastic brain, and with AI, God also provided the RAM.

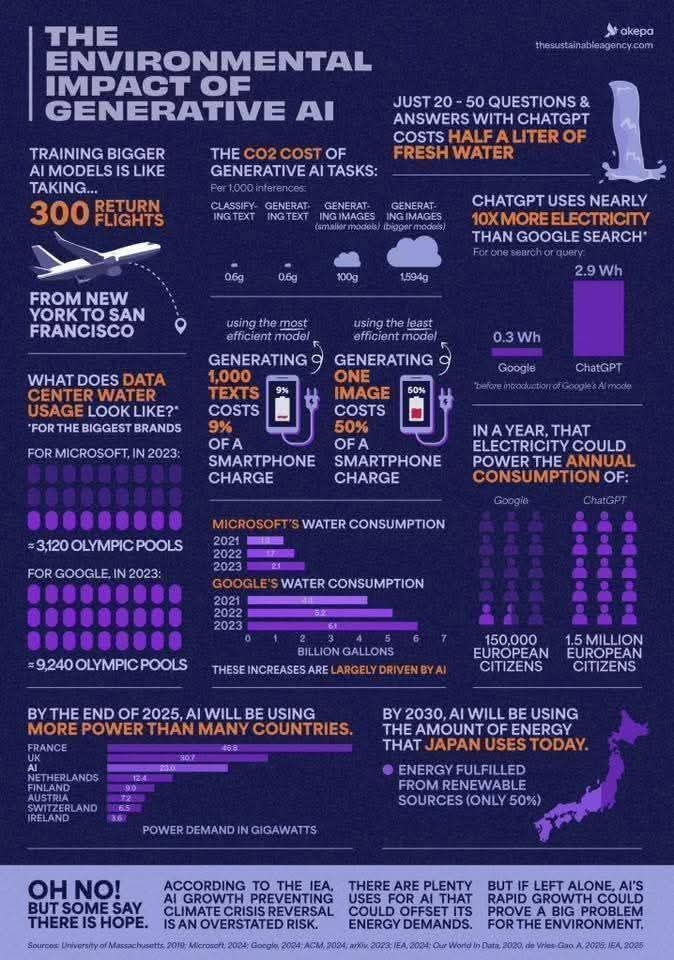

This is what I mean by distributed cognition. The Pope hasn’t even addressed the good uses of AI, and there are plenty. But they get lost in the debate when the overwhelming majority of what people do with AI is sloppy. People are blaming the tool instead of the mind using it, and in information technology, the problem has always existed between keyboard and chair.

The people coming for me lately are the people who object to my use of AI. That is because they only hear “uses AI,” and then the conversation is over.

The old way of computing is also over.

That said, I still hide in my room, because I’d rather entertain myself than try to explain myself to people who are dedicated to misunderstanding.

But when they are committed to comprehension, I’ll be glad they showed up.