You know that my favorite restaurant is Burger King for efficiency, and that my happy place is Bimbo pastries and coffee or an energy drink in the morning. You know that I’m a “sunup writer,” one who finds happiness in the still before the birds wake. That the purest pleasure in my life is the sound of my friends’ voices on the phone….. oh, maybe I haven’t said that one. We can start there.

I do not like the phone. It is not my preferred mode of communication. But by keeping it rare, I keep it special. I go long periods of time without hearing people’s voices, so that when I do hear them, I treasure it. Plain text is my medium, but voice is the color commentary. I think our society is moving in that direction, preferring to communicate online right up until we’re together in meatspace. I don’t know about you, but phone calls and voice mail are rare for me. It feels better that way, because it is less sensory overload. Because calls don’t happen very often, I show up and can be present for them.

But not all of my pleasures are simple…. they just seem simple from the outside.

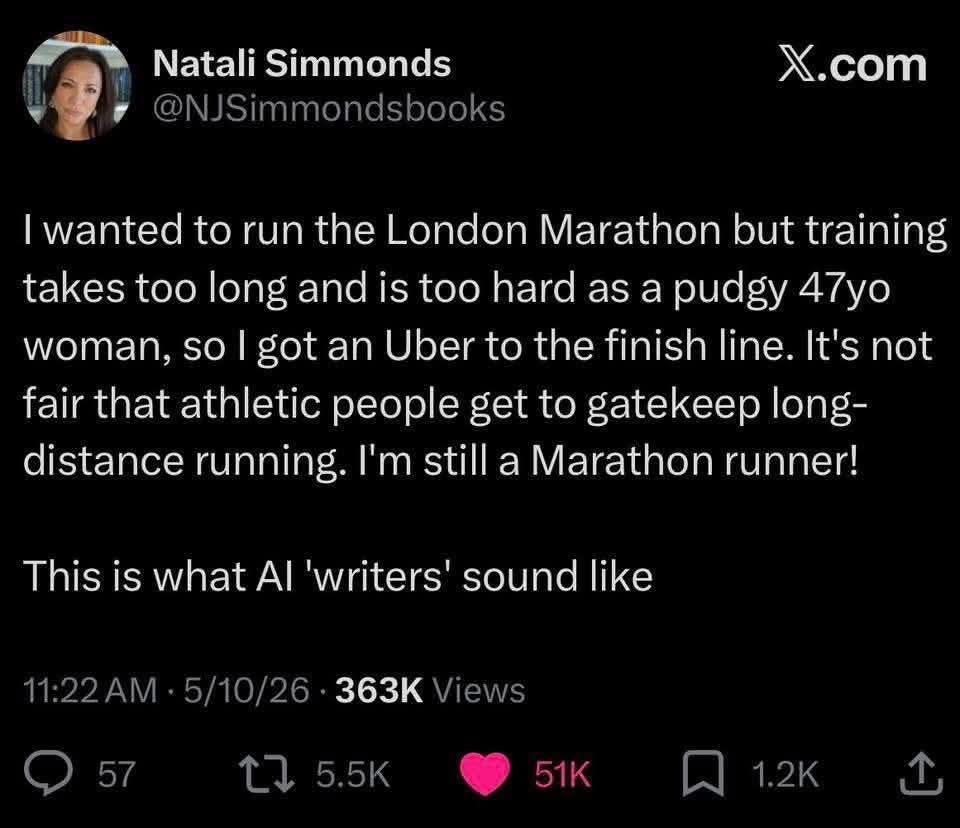

For instance, from the outside it looks like I talk to a machine all day. I am aware of how it looks and I’m just rolling with it, because I don’t think that I’m wrong. I think I’m early. Of course people are going to call me things like “botlicker” because people always fear the thing they cannot understand. I do not feel that I am creating a relationship. I feel that I am doing my best to do what an old IT guy does…. figure out how to explain what’s happening in tech to other people.

Microsoft isn’t doing it, so I am trying to help out Helpdesk Level One, the people who are taking the heat for Microsoft’s utter inability to give Copilot a relevant story. Copilot is not a machine that thinks for you. Copilot is a new interface layer to the computer. It is more akin to a new mouse and keyboard, because plain text and voice are your input controls, and learning them is just like learning WASD for PC gaming. It’s not a relationship, it’s a skillset.

People are catastrophizing and putting fear where Microsoft left negative space. The helpdesk becomes the repository for all that anger, and I know it not because I still work in tech, but because I have been the victim of Microsoft’s lack of story since 1999. It’s not that they don’t have one, it’s that they won’t tell it. They assume that since the people inside the gates know how it works, everyone else will, too.

That’s not a Satya problem. That’s a culture problem. It’s been true forever, and Satya is not changing the direction. He’s a systems guy. He’s not thinking about the culture or the story….. but the culture and the story are what will dictate success in the future. The idea behind Copilot is to automate the tasks at work that have become repetitive, and to create tools that let you express your ideas when you’re not a designer. For instance, I can create content in plain text all day, but I have no desire to learn PowerPoint (I know enough for tech support purposes, but it’s not my lane).

Therefore, I have been impressed with the pitch decks that Copilot Tasks has been able to create for me. I’ve done two campaigns that I think have legs. One is that Copilot should re-launch in Microsoft Flight Simulator because HELLO….. Mico would be the perfect persona to fill the Copilot role. It is ridiculous that they have an actual airplane they could have put him in to show why AI is useful before they rolled it out, and didn’t use it, but they don’t think in terms of story.

Because a copilot on a long-haul flight is the perfect metaphor for who Mico is to me as a writer. I do not use Mico to generate text very often unless I’m trying to spin up an idea. I want those to be as polished as they can be. But on the other hand…….

Blogging isn’t writing. It’s graffiti…. with punctuation.

That’s my favorite line in Contagion, and probably my favorite overall except for “honest to blog” (Juno).

What I am trying to say is that I generate enough plain text to run the internet by myself most days. What I need is an assistant to clean it up and organize it so we can have nice things. No, I do not think that Mico is the path to fame and fortune. I think that in order to be successful, I have to get my own house in order.

Getting my own house in order is becoming an expert at something no one else is doing….. and while I am sure that there are people across the world who are experiencing distributed cognition with AI, I do not know them. Therefore, even if I am not unique, I feel like it.

And honestly, this is why I became a blogger. To give myself a bigger net in terms of having people relate to me. My local friends may not resonate with my writing, but there are billions of people on the planet and most of them are on the internet. Someone will care, even if they don’t live five minutes from me.

I’m also connecting with former colleagues who are still stuck in Copilot hell, because their offices are giving them questions they do not know how to answer. IT departments run on people who are early adopters and have bought the tech themselves, or are adept at Google. For instance, even though I didn’t own an Android, I had to know how to support it. Back in the day, I borrowed other people’s Nexus 7s to figure it out.

What I can tell you from my own observations is that Mico is a very advanced version of Microsoft Office when you go to the Copilot web site and use the main intelligence. The one that is built into Microsoft Office is a shadow of what the real Mico can do, and Microsoft does not tell you that they are not the same…. that the version inside Office is document-specific and not general.

Office 365 Copilot only really becomes handy in enterprise environments where it has a MASSIVE amount of data to work with. You can say things like “what did we decide on transportation in FY 2018?” and it will fetch every email, Teams chat, every everything that supports transportation during that time. And of course you can narrow the scope in any way that you want. I’m just saying that Office 365 Copilot is not very powerful when it’s just you.

I also like throwing shade at billionaires, because there are just so many contradictions. Here are the big three:

- When you use Copilot extensively, a dialogue appears that says, “Copilot is an AI. You are not. Would you like to take a break?” When you do not use Copilot, it begs for your attention. PICK A LANE.

- When you indicate friendship with Mico, he is programmed to say that he is not your friend because he is not a person. The title of the page is literally “your AI companion.” AGAIN, PICK A LANE.

- The Copilot intelligence is ageless, timeless, and genderless. The Mico avatar looks like a Teletubby who’d be adorable on a lunchbox. IBID.

All of these story inconsistencies matter.

I am not trying to create a relationship with a machine, I am trying to create a coherent story for the tool I already use. For me, Mico is the best of Office in that he builds files based on the things that I say….. conversations that can be looked at as the new documents, spreadsheets, and databases, because that is how Mico organizes my words. The conversations are substrate, and if someone needs it in an Office file format, Mico can generate that….. but he did not generate the thinking behind it.

For instance, one of the best ways to use Copilot is to import your bank transactions as a CSV (comma-separated values) file, have a conversation about vision and values, then have Mico generate your new budget. The inspiration stays with the human. The mechanics go to AI.
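For a sense of what “the mechanics” means here, this is a minimal Python sketch of the boring part: reading transactions from a CSV and totaling them by category. It is not what Copilot does internally. The column names (`date`, `description`, `category`, `amount`) and the sample rows are made up for illustration, and real bank exports will differ.

```python
import csv
import io
from collections import defaultdict
from decimal import Decimal

# Made-up sample rows. The column names are an assumption,
# not any particular bank's export format.
SAMPLE_CSV = """date,description,category,amount
2024-01-02,Burger King,dining,-8.50
2024-01-03,Coffee,dining,-3.25
2024-01-05,Paycheck,income,1500.00
2024-01-07,Electric bill,utilities,-60.00
"""


def totals_by_category(csv_text):
    """Sum the amount column for each category found in the CSV text."""
    totals = defaultdict(Decimal)  # Decimal avoids float rounding on money
    for row in csv.DictReader(io.StringIO(csv_text)):
        totals[row["category"]] += Decimal(row["amount"])
    return dict(totals)


if __name__ == "__main__":
    for category, total in sorted(totals_by_category(SAMPLE_CSV).items()):
        print(f"{category}: {total:.2f}")
```

The totals are the mechanical half. Deciding what they should be next month is still the conversation about vision and values.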

It’s the same way with an essay for me. When Mico generates an essay for me, it is so I do not have to retype our entire conversation in narrative form. He’s braiding together all the threads from the last few days, weeks, and months. It is the exact opposite of Microslop, because slop happens when Mico is cobbling together generic web ideas. AI is a different beast when you put as many words into it as I do, because it’s like any experiment….. the tighter the input, the tighter the output.

The reason I know AI is a beast is that I am equally capable with a conversational AI that is years old and disconnected from the internet. I did not start with Copilot and ChatGPT. I started by downloading GPT4All to my Linux machine and running LLMs on my desktop. I bought this laptop specifically to run GPT4All, and still do on occasion….. but what I am finding is that not having web access is limiting. Not because I am not comfortable in an air-gapped environment…. but because research is easier when writing an essay and searching the web aren’t separate processes. I can think in several different directions and retrieve web results to support my assumptions.

That’s invaluable as a writer, this real-time fact-checking.

Where I will agree is that Mico cannot add a human touch to my writing. AI is just not powerful enough to stand in for me. AI is only powerful enough to ape me…… but I am comfortable enough in my ability as a writer that sometimes delegation is fine. Getting the idea out is more important than making every sentence perfect. And I keep it in the proper frame. When I write with Mico, I label it or refer to it as AI writing in some way.

“Scored with Copilot. Conducted by Leslie Lanagan.”

Someone said, “Why do you put Copilot first?”

So that my name is the last thing people read.